If you’ve been using EC2 for anything serious then you have some code on your instances that requires your AWS credentials. I’m talking about code that does things like this:

- Attach an EBS volume

- Download your application from a non-public location in S3

- Send and receive SQS messages

- Query or update SimpleDB

All these actions require your credentials. How do you get the credentials onto the instance in the first place? How can you store them securely once they’re there? First let’s examine the issues involved in securing your keys, and then we’ll explore the available options for doing so.

Note: With the release of IAM Roles, you can now pass access credentials to an EC2 instance via the metadata automatically. I wrote a Chef recipe to help you set up the new AWS Command Line tools, which use IAM Role credentials automatically.
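As a rough illustration of what "via the metadata" means, here is a minimal Python sketch of reading role credentials from the instance metadata service. The function names `http_get` and `role_credentials` are hypothetical, and the sketch assumes the original unauthenticated metadata request style; instances configured to require session tokens for metadata requests would need an extra token step that is not shown here.

```python
import json
import urllib.request

# Metadata path that lists the IAM role attached to the instance.
METADATA_BASE = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


def http_get(url, timeout=2):
    """Fetch a URL and return the response body as text."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


def role_credentials(fetch=http_get):
    """Return the temporary credentials of the instance's IAM role as a dict.

    `fetch` is injectable so the function can be exercised off-instance.
    """
    # The base path returns the role name; take the first line.
    role_name = fetch(METADATA_BASE).splitlines()[0].strip()
    # The role-specific path returns a JSON document with the credentials.
    document = json.loads(fetch(METADATA_BASE + role_name))
    return {
        "access_key_id": document["AccessKeyId"],
        "secret_access_key": document["SecretAccessKey"],
        "session_token": document["Token"],
        "expiration": document["Expiration"],
    }
```

These credentials are temporary and rotate, so an application would typically re-read them before the `expiration` time rather than cache them indefinitely. In practice the AWS SDKs and command line tools do this lookup for you.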

Potential Vulnerabilities in Transferring and Storing Your Credentials

There are a number of vulnerabilities that should be considered when trying to protect a secret. I’m going to ignore the ones that result from obviously foolish practice, such as transferring secrets unencrypted.

- Root: root can get at any file on an instance and can see into any process’s memory. If an attacker gains root access to your instance, and your instance can somehow know the secret, your secret is as good as compromised.

- Privilege escalation: User accounts can exploit vulnerabilities in installed applications or in the kernel (whose latest privilege escalation vulnerability was patched in new Amazon Kernel Images on 28 August 2009) to gain root access.

- User-data: Any user account able to open a socket on an EC2 instance can see the user-data by getting the URL http://169.254.169.254/latest/user-data. This is exploitable if a web application running in EC2 does not validate input before visiting a user-supplied URL. Accessing the user-data URL is particularly problematic if you use the user-data to pass in the secret unencrypted into the instance – one quick wget (or curl) command by any user and your secret is compromised. And, there is no way to clear the user-data – once it is set at launch time, it is visible for the entire life of the instance.

- Repeatability: HTTPS URLs transport their content securely, but anyone who has the URL can get the content. In other words, there is no authentication on HTTPS URLs. If you specify an HTTPS URL pointing to your secret it is safe in transit but not safe from anyone who discovers the URL.
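To make the user-data vulnerability concrete, here is a minimal Python sketch of the kind of input validation a web application could apply before visiting a user-supplied URL. The function name `is_safe_to_fetch` is hypothetical, and the policy (reject anything that resolves to a link-local, loopback, or private address) is an assumption about what "validate input" should mean, not a complete defense.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_safe_to_fetch(url):
    """Return False if the URL may point at the metadata service or another internal address."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    try:
        # Resolve the host name; a literal IP address resolves to itself.
        infos = socket.getaddrinfo(parsed.hostname, None)
    except socket.gaierror:
        return False
    for info in infos:
        # Drop any IPv6 scope suffix such as "%eth0" before parsing.
        address = ipaddress.ip_address(info[4][0].split("%")[0])
        # 169.254.169.254 is a link-local address, so it is rejected here.
        if address.is_link_local or address.is_loopback or address.is_private:
            return False
    return True
```

A check like this resolves the host name separately from the later fetch, so it does not by itself stop an attacker who controls DNS and changes the answer between the two lookups. It is a sketch of the idea, not a substitute for keeping secrets out of the user-data.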

Benefits Offered by Transfer and Storage Methods

Each transfer and storage method offers a different set of benefits. Here are the benefits against which I evaluate the various methods presented below:

- Easy to do. It’s easy to create a file in an AMI, or in S3. It’s slightly more complicated to encrypt it. But, you should have a script to automate the provision of new credentials, so all of the methods are graded as “easy to do”.

- Possible to change (now). Once an instance has launched, can the credentials it uses be changed?

- Possible to change (future). Is it possible to change the credentials that will be used by instances launched in the future? All methods provide this benefit, but some make it more difficult to achieve than others; for example, instances launched via Auto Scaling may require the Launch Configuration to be updated.

How to Put AWS Credentials on an EC2 Instance

With the above vulnerabilities and benefits in mind let’s look at different ways of getting your credentials onto the instance and the consequences of each approach.

Mitch Garnaat has a great set of articles about the AWS credentials. Part 1 explores what each credential is used for, and part 2 presents some methods of getting them onto an instance, the risks involved in leaving them there, and a strategy to mitigate the risk of them being compromised. A summary of part 1: keep all your credentials secret, like you keep your bank account info secret, because they are – literally – the keys to your AWS kingdom.

As discussed in part 2 of Mitch’s article, there are a number of methods to get the credentials (or indeed, any secret) onto an instance. Here are two, evaluated in light of the benefits presented above:

1. Burn the secret into the AMI

Pros:

- Easy to do.

Cons:

- Not possible to change (now) easily. Requires SSHing into the instance, updating the secret, and forcing all applications to re-read it.

- Not possible to change (future) easily. Requires bundling a new AMI.

- The secret can be mistakenly bundled into the image when making derived AMIs.

Vulnerabilities:

- root, privilege escalation.

2. Pass the secret in the user-data

Pros:

- Easy to do. Putting the secret into the user-data must be integrated into the launch procedure.

- Possible to change (future). Simply launch new instances with updated user-data. With Auto Scaling, create a new Launch Configuration with the updated user-data.

Cons:

- Not possible to change (now). User-data cannot be changed once an instance is launched.

Vulnerabilities:

- user-data, root, privilege escalation.

Here are some additional methods to transfer a secret to an instance, not mentioned in the article:

3. Put the secret in a public URL

The URL can be on a website you control or in S3. It’s insecure and foolish to keep secrets in a publicly accessible URL. Please don’t do this; I mention it only to be comprehensive.

Pros:

- Easy to do.

- Possible to change (now). Simply update the content at that URL. Any processes on the instance that read the secret each time will see the new value once it is updated.

- Possible to change (future).

Cons:

- Completely insecure. Any attacker between the endpoint and the EC2 boundary can see the packets and discover the URL, revealing the secret.

Vulnerabilities:

- repeatability, root, privilege escalation.

4. Put the secret in a private S3 object and provide the object’s path

To get content from a private S3 object you need the secret access key in order to authenticate with S3. The question then becomes “how to put the secret access key on the instance”, which you need to do via one of the other methods.

Pros:

- Easy to do.

- Possible to change (now). Simply update the content at that URL. Any processes on the instance that read the secret each time will see the new value once it is updated.

- Possible to change (future).

Cons:

- Inherits the cons of the method used to transfer the secret access key.

Vulnerabilities:

- root, privilege escalation.

5. Put the secret in a private S3 object and provide a signed HTTPS S3 URL

The signed URL must be created before launching the instance and specified somewhere that the instance can access – typically in the user-data. The signed URL expires after some time, limiting the window of opportunity for an attacker to access the URL. The URL should be HTTPS so that the secret cannot be sniffed in transit.

Pros:

- Easy to do. The S3 URL signing must be integrated into the launch procedure.

- Possible to change (now). Simply update the content at that URL. Any processes on the instance that read the secret each time will see the new value once it is updated.

- Possible to change (future). In order to integrate with Auto Scaling you would need to (automatically) update the Auto Scaling Group’s Launch Configuration to provide an updated signed URL for the user-data before the previously specified signed URL expires.

Cons:

- The secret must be cached on the instance. Once the signed URL expires the secret cannot be fetched from S3 anymore, so it must be stored on the instance somewhere. This may make the secret liable to be burned into derived AMIs.

Vulnerabilities:

- repeatability (until the signed URL expires), root, privilege escalation.
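The idea behind an expiring signed URL can be sketched in a few lines of Python. This is an illustration of the concept only: it is not the signature algorithm S3 uses, and the names `sign_url` and `verify_url` and the `Expires`/`Signature` parameter layout are hypothetical. In practice you would use the presigning support in an AWS SDK.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlparse


def sign_url(base_url, secret_key, expires_at):
    """Append an expiry timestamp and an HMAC signature over (path, expiry)."""
    path = urlparse(base_url).path
    message = f"{path}\n{expires_at}".encode("utf-8")
    signature = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    return base_url + "?" + urlencode({"Expires": expires_at, "Signature": signature})


def verify_url(signed_url, secret_key, now=None):
    """Return True only if the signature matches and the URL has not expired."""
    parsed = urlparse(signed_url)
    query = parse_qs(parsed.query)
    try:
        expires_at = int(query["Expires"][0])
        signature = query["Signature"][0]
    except (KeyError, ValueError):
        return False
    # Recompute the signature from the path and the claimed expiry time.
    message = f"{parsed.path}\n{expires_at}".encode("utf-8")
    expected = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    now = time.time() if now is None else now
    return hmac.compare_digest(signature, expected) and now <= expires_at
```

Note what the sketch does and does not protect: the expiry time cannot be extended without the signing key, but anyone who holds the complete URL before it expires passes verification. That is the "repeatability" vulnerability listed above.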

6. Put the secret on the instance from an outside source, via SCP or SSH

This method involves an outside client – perhaps your local computer, or a management node – whose job it is to put the secret onto the newly-launched instance. The management node must have the private key with which the instance was launched, and must know the secret in order to transfer it. This approach can also be automated, by having a process on the management node poll every minute or so for newly-launched instances.

Pros:

- Easy to do. OK, not “easy” because it requires an outside management node, but it’s doable.

- Possible to change (now). Have the management node put the updated secret onto the instance.

- Possible to change (future). Simply put a new secret onto the management node.

Cons:

- The secret must be cached somewhere on the instance because it cannot be “pulled” from the management node when needed. This may make the secret liable to be burned into derived AMIs.

Vulnerabilities:

- root, privilege escalation.

The above methods can be used to transfer the credentials – or any secret – to an EC2 instance.

Instead of transferring the secret directly, you can transfer an encrypted secret. In that case, you’d need to provide a decryption key also – and you’d use one of the above methods to do that. The overall security of the secret would be influenced by the combination of methods used to transfer the encrypted secret and the decryption key. For example, if you encrypt the secret and pass it in the user-data, providing the decryption key in a file burned into the AMI, the secret is vulnerable to anyone with access to both user-data and the file containing the decryption key. Also, if you encrypt your credentials then changing the encryption key requires changing two items: the encryption key and the encrypted credentials. Therefore, changing the encryption key can only be as easy as changing the credentials themselves.

How to Keep AWS Credentials on an EC2 Instance

Once your credentials are on the instance, how do you keep them there securely?

First off, let’s remember that in an environment out of your control, such as EC2, you have no guarantees of security. Anything processed by the CPU or put into memory is vulnerable to bugs in the hypervisor (the virtualization provider) or to malicious AWS personnel (though the AWS Security White Paper goes to great lengths to explain the internal procedures and controls they have implemented to mitigate that possibility) or to legal search and seizure. What this means is that you should only run applications in EC2 for which the risk of secrets being exposed via these vulnerabilities is acceptable. This is true of all applications and data that you allow to leave your premises. But this article is about the security of the AWS credentials, which control the access to your AWS resources. It is perfectly acceptable to ignore the risk of abuse by AWS personnel exposing your credentials because AWS folks can manipulate your account resources without needing your credentials! In short, if you are willing to use AWS then you trust Amazon with your credentials.

There are three ways to store information on a running machine: on disk, in memory, and not at all.

1. Keeping a secret on disk

The secret is stored in a file on disk, with the appropriate permissions set on the file. The secret survives a reboot intact, which can be a pro or a con: it’s a good thing if you want the instance to be able to remain in service through a reboot; it’s a bad thing if you’re trying to hide the location of the secret from an attacker, because the reboot process contains the script to retrieve and cache the secret, revealing its cached location. You can work around this by altering the script that retrieves the secret, after it does its work, to remove traces of the secret’s location. But applications will still need to access the secret somehow, so it remains vulnerable.

Pros:

- Easily accessible by applications on the instance.

Cons:

- Visible to any process with the proper permissions.

- Easy to forget when bundling an AMI of the instance.

Vulnerabilities:

- root, privilege escalation.
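As a minimal sketch of "appropriate permissions", the following Python function creates the secret file so that only its owner can read or write it. The name `store_secret` is hypothetical, and the sketch assumes a Unix-like system; it does nothing against root or privilege escalation, which remain the vulnerabilities listed above.

```python
import os


def store_secret(path, secret):
    """Write the secret to `path`, readable and writable by the owner only."""
    # O_EXCL refuses to reuse an existing file, whose permissions might be looser.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        # Enforce the mode explicitly, whatever the process umask is.
        os.fchmod(handle.fileno(), 0o600)
        handle.write(secret)
```

The file is created with restrictive permissions from the start, rather than being created first and restricted afterwards, so there is no window in which other accounts can read it. Pointing `path` at a memory-backed filesystem instead of a regular disk gives the in-memory variant discussed next.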

2. Keeping the secret in memory

The secret is stored as a file on a ramdisk. (There are other memory-based methods, too.) The main difference between storing the secret in memory and on the filesystem is that memory does not survive a reboot. If you remove the traces of retrieving the secret and storing it from the startup scripts after they run during the first boot, the secret will only exist in memory. This can make it more difficult for an attacker to discover the secret, but it does not add any additional security.

Pros:

- Easily accessible by applications on the instance.

Cons:

- Visible to any process with the proper permissions.

Vulnerabilities:

- root, privilege escalation.

3. Do not store the secret; retrieve it each time it is needed

This method requires your applications to support the chosen transfer method.

Pros:

- Secret is never stored on the instance.

Cons:

- Requires more time because the secret must be fetched each time it is needed.

- Cannot be used with signed S3 URLs. These URLs expire after some time and the secret will no longer be accessible. If the URL does not expire in a reasonable amount of time then it is as insecure as a public URL.

- Cannot be used with externally-transferred (via SSH or SCP) secrets because the secret cannot be pulled from the management node. Any protocol that tries to pull the secret from the management node can also be used by an attacker to request the secret.

Vulnerabilities:

- root, privilege escalation.

Choosing a Method to Transfer and Store Your Credentials

The above two sections explore some options for transferring and storing a secret on an EC2 instance. If the secret is guarded by another key – such as an encryption key or an S3 secret access key – then this key must also be kept secret and transferred and stored using one of those same methods. Let’s put all this together into some tables presenting the viable options.

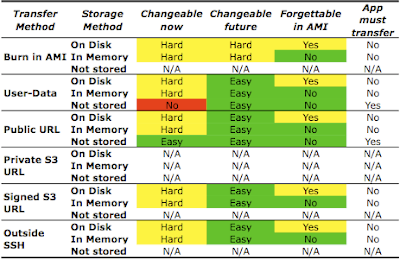

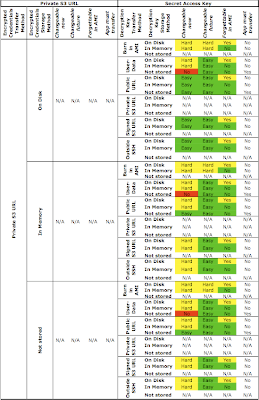

Unencrypted Credentials

Here is a summary table evaluating the transfer and storage of unencrypted credentials using different combinations of methods:

Some notes on the above table:

- Methods making it “hard” to change credentials are highlighted in yellow because, through scripting, the difficulty can be minimized. Similarly, the risk of forgetting credentials in an AMI can be minimized by scripting the AMI creation process and choosing a location for the credential file that is excluded from the AMI by the script.

- While you can transfer credentials using a private S3 URL, you still need to provide the secret access key in order to access that private S3 URL. This secret access key must also be transferred and stored on the instance, so the private S3 URL is not by itself usable. See below for an analysis of using a private S3 URL to transfer credentials. Therefore the Private S3 URL entries are marked as N/A.

- You can burn credentials into an AMI and store them in memory. The startup process can remove them from the filesystem and place them in memory. The startup process should then remove all traces from the startup scripts mentioning the key’s location in memory, in order to make discovery more difficult for an attacker with access to the startup scripts.

- Credentials burned into the AMI cannot be “not stored”. They can be erased from the filesystem, but must be stored somewhere in order to be usable by applications. Therefore these entries are marked as N/A.

- Credentials transferred via a signed S3 URL cannot be “not stored” because the URL expires and, once that happens, is no longer able to provide the credentials. Thus, these entries are marked N/A.

- Credentials “pushed” onto the instance from an outside source, such as SSH, cannot be “not stored” because they must be accessible to applications on the instance. These entries are marked N/A.

A glance at the above table shows that it is, overall, not difficult to manage unencrypted credentials via any of the methods. Remember: don’t use the Public URL method; it’s completely insecure.

Bottom line: If you don’t care about keeping your credentials encrypted then pass a signed S3 HTTPS URL in the user-data. The startup scripts of the instance should retrieve the credentials from this URL and store them in a file with appropriate permissions (or in a ramdisk if you don’t want them to remain through a reboot), then the startup scripts should remove their own commands for getting and storing the credentials. Applications should read the credentials from the file (or directly from the signed URL if you don’t care that it will stop working after it expires).

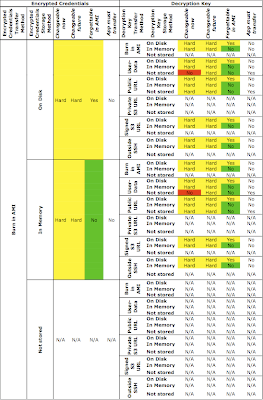

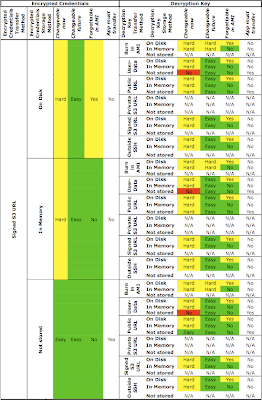

Encrypted Credentials

We discussed 6 different ways of transferring credentials and 3 different ways of storing them. A transfer method and a storage method must be used for the encrypted credentials and for the decryption key. That gives us 36 combinations of transfer methods, and 9 combinations of storage methods, for a grand total of 324 choices.
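The arithmetic can be checked by enumerating the combinations. This short Python sketch uses shorthand labels for the six transfer methods and three storage methods; the labels are my abbreviations, not names used in the tables.

```python
from itertools import product

TRANSFER = ["AMI", "user-data", "public URL", "private S3 URL", "signed S3 URL", "SSH/SCP"]
STORAGE = ["disk", "memory", "not stored"]

# Each choice is a tuple of:
# (credentials transfer, key transfer, credentials storage, key storage)
choices = list(product(TRANSFER, TRANSFER, STORAGE, STORAGE))

# The subset where the encrypted credentials are burned into the AMI.
burned_into_ami = [choice for choice in choices if choice[0] == "AMI"]

print(len(choices))          # 324
print(len(burned_into_ami))  # 54
```

Fixing the transfer method for the encrypted credentials leaves 6 key-transfer methods times 9 storage combinations, which is why each of the tables covers 54 choices.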

Here are the first 54, summarizing the options when you choose to burn the encrypted credentials into the AMI:

As (I hope!) you can see, all combinations that involve burning encrypted credentials into the AMI make it hard (or impossible) to change the credentials or the encryption key, both on running instances and for future ones.

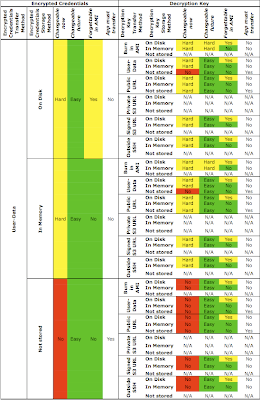

Here are the next set, summarizing the options when you choose to pass encrypted credentials via the user-data:

Passing encrypted credentials in the user-data requires the decryption key to be transferred also. It’s pointless from a security perspective to pass the decryption key together with the encrypted credentials in the user-data. The most flexible option in the above table is to pass the decryption key via a signed S3 HTTPS URL (specified in the user-data, or specified at a public URL burned into the AMI) with a relatively short expiry time (say, 4 minutes) allowing enough time for the instance to boot and retrieve it.

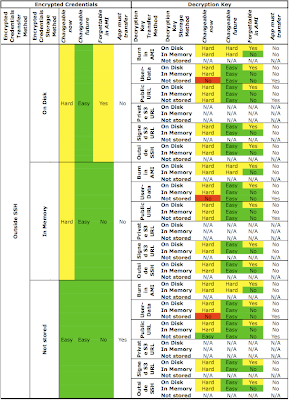

Here is a summary of the combinations when the encrypted credentials are passed via a public URL:

It might be surprising, but passing encrypted credentials via a public URL is actually a viable option. You just need to make sure you send and store the decryption key securely, so send that key via a signed S3 HTTPS URL (specified in the user-data or specified at a public URL burned into the AMI) for maximum flexibility.

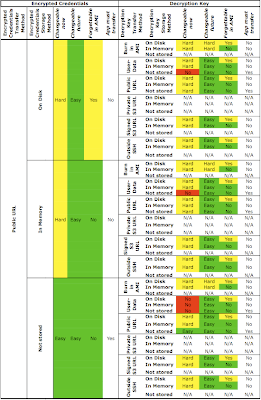

The combinations with passing the encrypted credentials via a private S3 URL are summarized in this table:

As explained earlier, the private S3 URL is not usable by itself because it requires the AWS secret access key. (The access key ID is not a secret.) The secret access key can be transferred and stored using the combinations of methods shown in the above table.

The most flexible of the options shown in the above table is to pass in the secret access key inside a signed S3 HTTPS URL (which is itself provided in the user-data or at a public URL burned into the AMI).

Almost there…. This next table summarizes the combinations with encrypted credentials passed via a signed S3 HTTPS URL:

The signed S3 HTTPS URL containing the encrypted credentials can be specified in the user-data or specified behind a public URL which is burned into the AMI. The best options for providing the decryption key are via another signed URL or from an external management node via SSH or SCP.

And, the final section of the table summarizing the combinations of using encrypted credentials passed in via SSH or SCP from an outside management node:

The above table summarizing the use of an external management node to place encrypted credentials on the instance shows exactly the same results as the previous table (for a signed S3 HTTPS URL). The same flexibility is achieved using either method.

The Bottom Line

Here’s a practical recommendation: if you have code that generates signed S3 HTTPS URLs then pass in two signed URLs into the user-data, one containing the encrypted credentials and the other containing the decryption key. The startup sequence of the AMI should read these two items from their URLs, decrypt the credentials, and store the credentials in a ramdisk file with the minimum permissions necessary to run the applications. The start scripts should then remove all traces of the procedure (beginning with “read the user-data URL” and ending with “remove all traces of the procedure”) from themselves.

If you don’t have code to generate signed S3 URLs then burn the encrypted credentials into the AMI and pass the decryption key via the user-data. As above, the startup sequence should decrypt the credentials, store them in a ramdisk, and destroy all traces of the raw ingredients and the process itself.

This article is an informal review of the benefits and vulnerabilities offered by different methods of transferring credentials to and storing credentials on an EC2 instance. In a future article I will present scripts to automate the procedures described. In the meantime, please leave your feedback in the comments.

35 replies on “How to Keep Your AWS Credentials on an EC2 Instance Securely”

Another option you can add to the matrix is using an additional authenticate only aws user.

Create a new aws user, 'wimpy', but do not sign up for any services, and do not provide a credit card.

Although the new user cannot provision any aws resources, it does get an account id and access keys. Private s3 buckets and objects can be shared with this wimpy user. The wimpy user credentials can be provided in the userdata (or similar options mentioned) allowing boot scripts to retrieve authenticated objects from s3, while not exposing the keys to the entire AWS kingdom.

A benefit of this approach, as compared to time-expiring s3 urls, is that it can be used with autoscaling.

This method will not however give access to ec2-api commands such as ebs-attach-volume etc. If (and only if) access to these commands is required from the instance a separate monitor instance that does have the primary AWS keys can be used to proxy ec2 commands. The monitor host can listen for requests on the 10.* network from authenticated security groups, and run whatever additional verification is required before then executing the requested ec2-command. This reduces the exposure of your secret to a single instance.

@Michael Fairchild,

Mitch Garnaat suggests a similar two-credential method in part 2 of his article (linked above). He calls them "Secret Credentials" ('wimpy') and "Double Secret Credentials" (the real ones).

The "monitor host" idea is similar to one I've been kicking around lately. My comment to Mitch's blog post outlines the idea, and some more detail is in the comments to the following blog:

http://elastic-security.com/2009/08/20/ec2-design-patterns-1-externalconsole/

Given the constraints imposed by Auto Scaling, I think there's a better option than using signed URLs.

Signed URLs have the problem of a hardcoded expiration date, which means you need some external script which is vigilant in continually generating new signed URLs and updating your Auto Scaling Group parameters with the latest URL (which will need to be replaced again in X minutes). This puts robustness in direct opposition to security – the most secure solution mandates a short expiration time, which decreases the robustness of the system by requiring the external script to run frequently, without fail.

There's a better solution that keeps all the security goodness of signed URLs with none of the "signed-URL-generator-must-run-or-Auto-Scaling-will-fail" badness.

Instead of using signed URLs, use *public* URLs with a random path element:

https://s3etc/as0df98a0b980a98a0sd98f0a98sdfa/secret-user-data.txt

The URL is world-readable, but its path is unguessable (just like a signed URL).

Your Auto Scaling Launch config is initially configured with this URL.

Your external script then runs *whenever it wants*, creating a new random path & uploading your data to it, and then updating the Auto Scaling Launch Config to point at the new path. The script then deletes the file from the old path, so all running instances no longer have access to the secret data.

This can be combined with the "wimpy" auth scheme so that the URL doesn't even need to be public, and thus your attacker (if lucky enough to remote-exec on the machine before the URL dies) needs more than just 'curl' to get the secret data.

@6p00e54ee6e7b68834,

That's also a good suggestion. Even better would be to use a single-use URL, which would cease to work after the first retrieval. Then it would not need to be deleted.

AWS could help a lot by providing a way to generate credentials constrained to specific APIs. For example, if I have a machine that simply writes to an SQS queue, then I would generate credentials that only have access to the SendMessage API. If my machine needs to attach EBS volumes and access S3, I would generate credentials with only those permissions. That way in the case of a compromised system or elevation of privilege the damage done is limited to the rights granted in the credentials.

@Gabe,

Absolutely, I agree that fine-grained credentials would help mitigate the risk of compromised credentials.

Good article- any thoughts on using SimpleDB to store credentials?

@Yarin,

SimpleDB requires AWS credentials to access. So it’s equivalent to the option “4. Put the secret in a private S3 object and provide the object’s path” discussed above.

If I generate a presigned URL with Amazon’s SDK to a private S3 object, I can access it in a regular browser, but when I wget/curl it I get an Error 403: Forbidden. Do you know why that is?

@Jack,

Try putting the URL you give to wget in quotes. Some of these URLs have special characters that the shell interprets and quoting the URL argument will prevent the shell from interpreting those special characters.

Thank you so much! quotes around the url works like a charm!

@Schlomo,

I have been struggling with the same challenge of getting AWS credentials on an EC2 instance. I came up with roughly the same list of options as you, until tonight, when I thought of another possibility:

when launching an instance, one can specify a snapshot to automatically create an EBS volume from and bind it to a block device. What if you created an EBS volume, put your credentials on it, create a snapshot from that, and then use the mentioned approach? One could use the user-data script (or whatever) to mount the block device and read the credentials. And when an instance terminates, by default the created EBS volume gets deleted (unless you turned it off in the –block-device-mapping option). Make sure the snapshot is private though. And I assume traffic between EC2 and EBS is secure, however I’m not sure of that, but there are many EBS boot images now, so that would be awkward then. Finally, it’s possible to encrypt the EBS volume at filesystem level, and pass the key for it in your user-data script; it doesn’t add security, but prevents someone else from reading the raw storage after having deleted the volume.

That still leaves the ‘How to Keep AWS Credentials on an EC2 Instance’ part, probably you would need to look at SELinux or AppArmor to fix that one, if EC2 even supports that (since EC2 provides the kernels). Also, one could use a read-only filesystem on the EBS volume and have some credentials broker there which takes proper measures to prevent unauthorized retrieving of the credentials; but no idea how to really secure that yet, if it is even possible (since root can do anything, but one could look at the pid of the process requesting the credentials, see which binary it belongs to and check whether the binary is untampered with for example, one could store a list of binaries and sha1sums in the read-only filesystem; but the filesystem itself might be unmounted/recreated/mounted as well).

@Ewout,

Thanks for your comment! I’ve written an article showing how to implement this technique.

You do realize that once the volume is mounted the credentials are available in clear text for any process with uid 0, right? (think “hackers” here) So what’s the improvement then? Let’s face it, there is *no* secure way to store clear text credentials. And you need them in clear text if you want to use them for AWS.

@never mind,

True, there’s no secure way to secure clear-text credentials.

The AWS Identity and Access Management features can be used to mitigate the risk of credentials being exposed.

[…] Swidler, Schlomo. “How to Keep Your AWS Credentials on an EC2 Instance Securely.” Retrieved December 6, 2012 from http://shlomoswidler.com/2009/08/how-to-keep-your-aws-credentials-on-ec2.html […]

I believe this is now obsolete with the release of IAM Roles?

@Ken Garland,

Yes, you can now use IAM Roles to get AWS credentials supplied to an EC2 instance via the metadata.

IAM roles work out of the box when the secret is AWS credentials, but what if you want to pass other secrets to your app (external services credentials, for instance) ?

Would putting the secrets file on S3, then giving access to this bucket only to the right instances be the best solution nowadays ?

@Renaud,

Great question. You can use IAM Instance Roles to allow the instance to access AWS resources – such as an S3 object. This approach is vulnerable to the first three vulnerabilities (mentioned in the beginning of my article): Root, Privilege Escalation, and User-Data.

If you use AWS OpsWorks then the Custom Stack JSON is a better place to keep secrets – just make sure you use IAM to control which users can access OpsWorks and see those secrets. This approach is vulnerable to the Root and Privilege Escalation vulnerabilities, but not the User-Data vector.

Does the release of AWS KMS make the S3 option more secure?

@Michael Mathews,

No. KMS API calls still need to be authenticated using your credentials, which need to be kept secret.

Thanks Shlomo !

We were doing great so far with “6. Put the secret on the instance from an outside source, via SCP or SSH”, but this has to change as we move to autoscaling : upon booting from a base image, we need instances to set themselves up automatically : pull the latest app code from git (using a private ssh key) + get the secrets (credentials for other services) that we don’t want to store in git, and finally start the app.

In this situation, can you see any added value in putting the secrets on S3, manage access to them using IAM instance roles and retrieving them upon app startup, rather than just putting them directly in user-data ?

@Renaud,

There are two advantages to putting the secrets in an external location and pulling them:

1. It’s easier to change the secrets. When the secrets are in an external location it’s possible to change them and then re-run the code on the instance that fetches them. When the secrets are in user-data, you need to relaunch the instance with updated user-data, or stop the (EBS-backed) instance in order to edit the user-data. In my article above I called this benefit “Possible to change (now)”.

2. It’s easier to integrate with automation frameworks (such as OpsWorks) which may not support user-data or provide “second-class citizen” support for it.

The only disadvantage is that using an external location involves more components, meaning more things that can possibly break. Depending on your reliability requirements, this tradeoff may be acceptable.

So I’ve been picking at this problem for a bit, as I want to use clean AMIs, and I have external credentials I need it to use.

Basically the use of a proxy server which takes as arguments the requester’s AWS Instance ID, and the file(s) requested. The proxy looks up the requester’s instance ID and internal IP address in the AWS meta data to verify that it is a real requester, checks that the start time is within X minutes, marks the instanceid as blacklisted, and returns the requested file(s).

Since in the AutoScaling situation when the instance is instantiated it will NEVER ask twice anyway, we can safely ban instances after they make their requests.

This allows the userdata to contain requests for privileged files, but knowing where to get them does nothing for the attacker unless they have the ability to instantiate new instances, at which point you have much bigger problems.

I found this URL after I came up with this method, which verifies this as a somewhat imperfect solution:

http://ogryb.blogspot.com/2013/08/cloud-hsm-part-3-passing-secrets-as.html

@Stuart H,

Glad to hear this works for you.

It’s still possible for instances launched via AutoScaling to ask your service for their credentials again – it depends how the code to do so was written. Reboots and stopping and starting an EBS-backed instance (possibly with a changed boot volume) must be taken into consideration.

One question: How do you get your secrets into the instance that runs the proxy service?

You could provide the credentials via user data, then block any further access to user data with iptables. Something like this (but done properly – this iptables rule would be lost on reboot):

    #cloud-config
    write_files:
      - content: "{{some super secret stuff}}"
        path: "/home/god/.ssh/id_rsa.supersecret"
        owner: "baby:baby"
        permissions: "0600"
    runcmd:
      - iptables -A INPUT -s 169.254.169.254 -j DROP